Are you someone who regularly uses the internet? Or who lives on planet Earth? Then you’ll probably want to pay attention to the Australian government’s social media ban that just went into effect, because even if you don’t live in Australia, it will likely affect how you use the internet.

Hey folks, welcome to the first of many monologues. I’m going to be experimenting with the sort of content I’m putting out through A Modern Remedy, and regular solo episodes are something I’ve wanted to do for a while. This will also be published as a written piece on the site, a modern remedy dot com, and I’ll eventually be putting this out as a newsletter as well. Consider these monologues a companion piece to the New Ways pod, even if it’s actually the other way around. I’m also still playing around with how I’m going to structure these and my evolving setup. Right now, my process involves lengthy research around a central thesis, which I then format into a script of around 5,000 words that I’m currently reading from. I might eventually move to a more free-flowing, dot-point video style, and save these longer reads for audio only, or something like that, but let’s see how this goes for now. And no, ‘AI’ was not used in any part of the process here, not research, not ‘brainstorming’, not anything. I prefer not to outsource my cognition, and that’s only one of my many issues with generative ‘AI’, but that’s a tale for another day. These are my thoughts, and my analysis, powered by organic intelligence.

The plan with these is to do some deeper analysis on important happenings in the world, and what better way to kick things off than evaluating this brand-new law that has the power to reshape how millions, if not billions, use the internet.

We’re going to go into where this ban came from, what it’s trying to do, why that’s likely not going to work, the unintended (or perhaps intentional) side effects that aren’t being talked about, what governments should be doing instead, and whether this social media ban could indeed kick off a ripple effect felt right around the world.

The background on this ban

Okay, so let’s break down what this ban is, how it’s being implemented, and what it means for users of the internet, both in Australia and around the world. Now, despite vocal public and industry criticism, the Australian federal government has pressed ahead with a social media ban that was first introduced to parliament back in 2024, before passing both houses with a majority vote. It’s actually an amendment to an existing law, the Online Safety Act of 2021, and is officially known as the Online Safety Amendment (Social Media Minimum Age) Act 2024. The original Online Safety Act is aimed at limiting the creation and distribution of harmful content. That law was a reaction to the limited powers the eSafety Commissioner had to do, well, anything about the sites that were continuing to host video of the horrendous Christchurch mosque shootings, and was later presented as a way to also tackle online harassment and bullying. Now in effect, this “ban” means that from the 10th of December, 2025, as in, 48 hours ago, social media platforms that operate in Australia will have to take “reasonable steps to prevent Australians under the age of 16 from creating or keeping an account”.

Obviously, you might be wondering, well, what sites are we talking about here? So far, that list includes Facebook, Instagram, Kick, Reddit, Snapchat, Threads, TikTok, Twitch, X/Twitter and YouTube. All the usual suspects, and a few others. At one point, that list also included Discord, GitHub, Pinterest, Steam and WhatsApp, which could best be described as a chat app, a code repository, a picture-saving app, a video game store and, well, another chat app. This ‘banned list’ was whittled down after initial concerns that the proposed law was too broad in scope, along with continuing criticism around privacy, but more on all that in a moment.

Australia’s social media ban has been positioned as a way to improve the mental health of young people by limiting their access to social media platforms at a formative and precarious age. And despite claiming to be a world-first ban, Australia is not alone in its efforts to put something like this into place. In fact, the United Kingdom beat it to the podium by nearly two years. The UK’s Online Safety Act was passed in October of 2023, and similarly focuses on preventing access to content deemed harmful to children. Just like Australia’s new law, it does so in part by imposing age verification requirements on sites and services that fall within scope.

Likewise, the United States has tried to pass similar legislation, most notably the Kids Online Safety Act, also known as “KOSA”, which failed to pass in 2022 and again in 2024. In the case of this law, both sides of the aisle had significant concerns around censorship, constitutional violations and potential court challenges on a state-by-state basis. And censorship is of particular interest as we take a look at Australia’s social media ban.

This isn’t the first attempt at online censorship

The next logical question is, where did all of this start? Well, the origins of Australia’s ban date back to a time before the US and UK’s attempts to block social media. In fact, the Australian government has been trying to lock down the internet for some time. For those of you not living here, I’ll forgive you for not knowing about the 2008 internet censorship debacle. Actually, let’s call it what it really was – a shit storm. Back then, the Labor Government of the day decided to support a radical idea from the new Minister for Broadband, Communications and the Digital Economy, Senator Stephen Conroy. His grand plan was to stop harmful content by imposing an internet filter, which would be implemented by Internet Service Providers Australia-wide.

Yes, you heard me right – an internet filter.

I’m going to spare you all the details here, but needless to say, it was incredibly unpopular. The list of sites that would have been filtered grew to include anything that “offended against the standards of morality”. In fact, the proposed ‘blacklist’ was leaked to the public, and it hilariously contained many websites that were anything but morally questionable, including several small businesses, such as a dentist’s office in Queensland. You can only imagine the potential damage and lost revenue they would have faced had they been wiped from the internet back in 2008, let alone in 2025.

Unfortunately, Conroy doubled down, continuing to bark demands to the media in an attempt to fortify his position. Yet this ultimately proved to be his undoing, and he soon found internet activists creating websites that mocked him, and eventually, after mounting public pressure and stiff opposition from other political parties, Labor withdrew the widely unpopular policy.

That said, had the proposed internet filter actually gone through and become law, it would have set the framework for increased government censorship and tighter control of the internet in the years that followed. A social media ban would have been a fast follow, and much easier to pass through parliament, given the earlier precedent.

Now, technically speaking, the filter does live on, albeit on a much smaller scale. In 2015 the Australian government passed into law an amendment to the Copyright Act of 1968, which eventually led to Australian ISPs being mandated to block a handful of popular torrenting websites on the grounds of illegal copyright infringement. Seems like a fair and reasonable thing, right? Again, let’s call it what it is – this is a form of internet censorship, and any form of enforced censorship starts to become a slippery slope of what is and isn’t allowable, depending on who’s in government and what their motives and agendas are. Keep that in the back of your mind as we discuss this new social media ban.

Why you should be concerned

Now, I bet many of you are thinking, well, I’m not in Australia, or, even if I was, I’m not 16 so I have nothing to worry about.

And you’d be right… if you weren’t wrong.

See, the thing about a ban is that it impacts everyone, not just those it explicitly targets, and that’s especially true when you consider the means by which this ban is being implemented and upheld. The politicians and media might be calling it ‘age verification’, but what they really mean is ‘identity verification’. This isn’t just about verifying your age, it’s about verifying who a potentially infringing account belongs to, and that can mean… well, any number of things, because the government is leaving it up to the platforms themselves to decide how they will verify people’s ages. For some, like Meta, owners of Facebook and Instagram, suspect accounts may be required to upload a copy of their government ID to a third party in order to verify they are over 16. Others, like Snapchat, will utilise ConnectID, a service that connects with a user’s bank account for verification. Then there’s YouTube, who stated in a recent update that they will “determine a user’s age based on the age associated with their Google account and other signals and will continue to explore how we implement and apply appropriate age assurance.” Those other methods could include uploading a facial scan and/or analysis of user behaviour and account activity, at the discretion and interpretation of the platforms themselves.

Funny how, on one hand, the government doesn’t trust social media platforms to regulate themselves, and on the other, it is entrusting them to verify the age of their users and to safely capture and store the personal data used in that process. And I could put together a whole separate episode about the well-known accuracy issues surrounding facial scan technology, but all I’ll say on that is – there will be a lot of false positives and false negatives for people approaching, or just past, that 16-year-old age threshold.

And whilst this ban might in theory be targeted at under-16s, it will only be effective if everyone is verified. And that’s one of the main issues.

Think about driving a car. Every “legal” driver has a government issued driver’s license. The authorities don’t just hunt around for people they think are under the legal age to drive a car – each and every person, regardless of age, upon request, must display their license.

Another example that we’ve seen here in Australia is ID scanning when entering a nightclub. It used to be that the door staff, bouncer, whoever, would check your ID if they felt you were underage, or to hit their verification quota for the evening. These days it is not uncommon for every single person in line to have both their ID and face scanned before they are allowed entry. And for those of you rightfully asking about data security, don’t worry, we’ll be returning to that in a moment.

The point here is that, whether it’s today or tomorrow, under these new laws you are likely going to have to provide some form of personally identifiable information in order to use a large chunk of the internet.

And again, to my overseas friends, if you think your government won’t try this on, you are kidding yourselves. It’s already passed in the UK and Australia, Canada is talking about it, and whilst US attempts are currently stalled at the federal level, many states have already started enacting ID verification for accessing websites that are ‘adult in nature’. I think you know what I’m talking about there.

Who are we giving our data to?

All of this raises even more questions. Like, who are we actually giving our personally identifiable data to? Which companies are involved here? Are they foreign or domestic? How long will they keep our data for? What (if any) security regulations must they comply with? And how are they protecting our data from being hacked and stolen?

Actually, let’s focus in on that last part. We’ve already talked about the fact that the processes involved in the collection and verification of our personal data will be handled in a mish-mash and non-uniform way by each of the platforms that fall under this ban, and it looks as though by passing the onus onto the platforms themselves, the government has absolved itself of any sort of uniform data privacy and security standard as it relates to this law.

Now, this introduces two big problems.

The first, and more obvious, is with the data stores, or where our personal data will be housed. You’d be fooling yourself if you think those data stores won’t soon become the number one target of malicious actors looking to get their hands on that highly valuable data, whether to hack into bank accounts, access sensitive information or sell it on to data brokers and online advertising companies. Sure, having these stores located all around the world does introduce a level of decentralised security, as in, there isn’t one big digital vault to crack, but it also creates more and more attack vectors. It’s like building a house with lots of rooms and hundreds of windows. All it will take is for one of those windows to be left open, even just a tiny bit, and the resulting data leak will make Australia’s recent (and massive) thefts of private health data and telecommunications customer data seem trivial in comparison.

The second issue is around data collection. With so many people required to legitimately verify their identity, there are bound to be a few illegitimate players who come to the fore as well. I myself have already seen ads for “quick, easy and accurate” age verification services popping up on Reddit and elsewhere. And that’s not factoring in under-16s who are desperate to stay connected with their friends through social media and who, emotionally charged, will likely fall for phishing scams that promise to help them get around the ban. To them, their whole online world has come crashing down, and they will likely do whatever it takes to keep using these platforms, ironically risking their own personal information in the process.

Cutting kids out of the internet is child abuse

This ban will ultimately do more harm than good. In fact, I’d argue that banning social media and cutting kids out of the internet is child abuse. Yeah, you heard me. I realise that’s a very heavy statement to make, so let’s break it down.

First off, for those of you over the age of 16, I want you to cast your mind back to what it was like being a teenager. It was a confusing time. Your life was changing, your body was changing, you were trying to navigate school, trying to fit in, testing out different social groups, looking for acceptance, balancing new rules and new freedoms, and all the while trying to discover who you were in the hopes of carving out an authentic identity.

That’s a lot of shit to have going on.

Thankfully, you likely had a community or two to fall back on. To give you support. To face all these questions and tough challenges with you, and to help you with the stuff you couldn’t ask your teachers or wouldn’t want to ask your family. You bonded with them, shared similar interests and explored all the promise of the world you were inheriting from the previous generation. Now, I can only speak for my own experience here, but I imagine that for someone growing up as a minority, or as an LGBTQ youth, or someone who just feels like an outsider, having the internet and being able to connect with like-minded people from across the world must feel like an absolute lifeline. It would allow you to connect and form communities with people you feel a sameness with, to help you through these formative years and feel less alone in the world.

And this is why I, and many others, strongly feel that this ban will hurt the people it is supposed to protect.

Kids, all kids, need to find community, connection. Cutting them off is not the way to do it.

And look, I get it. There will be those of you who know someone who was cyber-bullied, consumed some graphic content, fell into a pit of anxiety due to social comparison, struggled with depression brought on by lengthy screen time, or worse. Some of you might be able to point to someone who is no longer with us, and that is a terrible pain and a sadness that you may never fully overcome. I’ve unfortunately had some experience with this, and I truly empathise. That said, I know that a blanket ban will not solve this issue. Banning kids from using social media won’t prevent online harassment, as bullying will continue to exist as long as people exist. It won’t stop the flood of harmful, algorithmically recommended content designed to foster engagement at all costs. There are much more effective strategies that we could be employing here, and we’ll get into those in a moment. But I just wanted to take a second and acknowledge the friends, family, parents and loved ones of young people who are doing it tough or who have gone through tough times, seemingly due to their use of the internet. You deserve solutions, just as our young children deserve a level of care and protection from the ills of society. But this social media ban isn’t how we are going to get there.

And what of those kids in remote and rural towns who are already living in socially isolated conditions? Restricting their access to these platforms will cut them off from the friends they’ve made from across Australia and beyond. Does the Prime Minister expect that these kids will hop on a train or a plane, or take a five-hour car ride, to hang out with the social groups they’ve already forged? Australia is a vast and remote country, with a large divide between the city, suburbs and country towns, and this ban will only widen that divide for our young people.

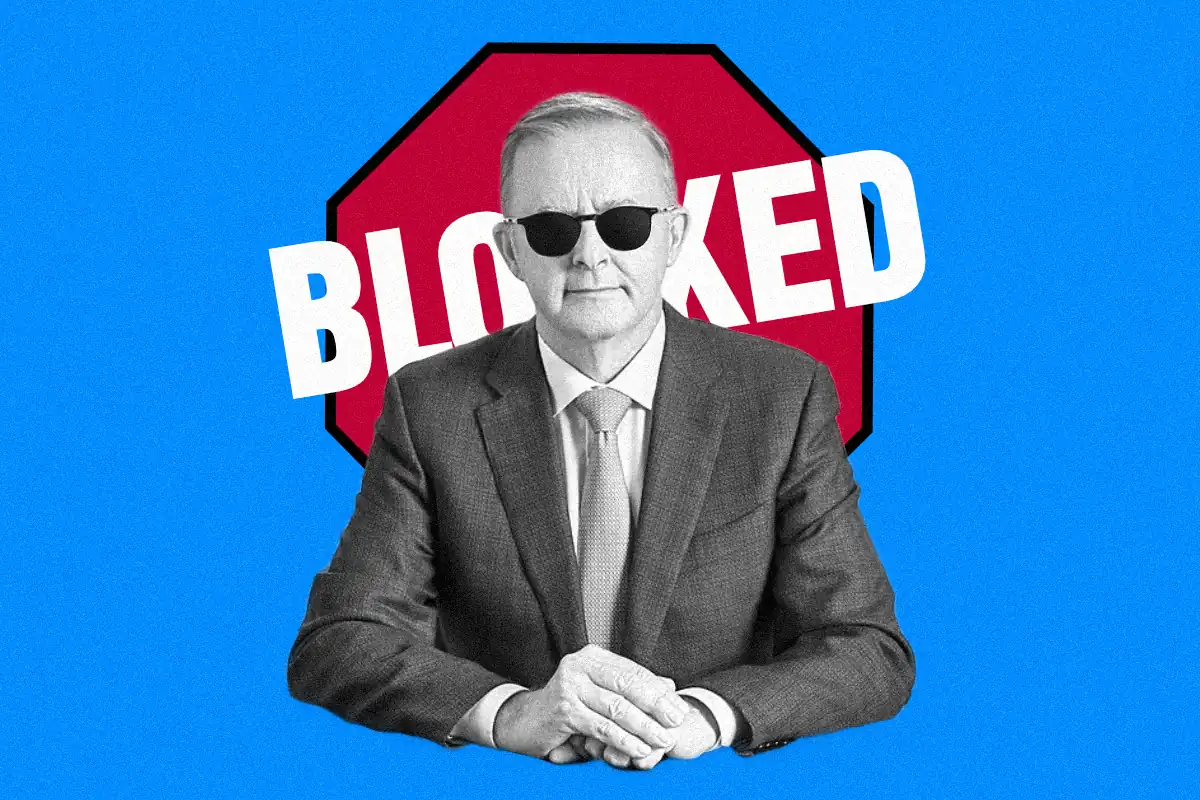

The Australian Prime Minister, Anthony Albanese, proudly claimed in a recent media interview that this ban will “save young lives”, and stated earlier that he felt this ban could help young kids, quote, “to spend more time on the footy field or the netball court than they’re spending on their phones”. Now, in principle, I’d love to see that second statement become a reality, but blocking young people from communicating online is not the way you get that to happen. And it certainly won’t save lives. In fact, by cutting off young people’s ability to connect with their friends and their communities, you actually put them in danger. Think of the young person who may be experiencing abuse at home, who doesn’t yet feel comfortable asking their teachers for help, or who doesn’t know what to do or how to even start dealing with that situation. They often turn to the internet for advice. In fact, there are entire subreddits dedicated to that, like r/WhatShouldIDo, with over 1.3 million members. Every other day there are questions from young teens asking for help with relationships, abuse and serious life challenges. The sub’s community responds, upvoting the most helpful reply, one that is usually grounded in logic and directs the person to ways they can improve, address, or in some cases safely escape, their current situation. They offer resources, a kind word, and a much-needed sense of safety and support.

So, thank goodness that Reddit is now on the banned list, or these kids might have received some life-saving advice!

Actually, thank goodness that Reddit and YouTube, among other platforms, have stated that they are planning on fighting this ban.

Who is to blame here?

Look. It might be easy to come to the conclusion that the platforms are one of the victims of the ban. But that misses the point entirely. And I’m also not the biggest fan of social media platforms in general, but I’m less of a fan of government mandated censorship and attempts to inhibit people’s ability to interact with one another, regardless of age.

The main problem isn’t that children are running around cyberbullying each other every night after school. Nor is it that the parents of said children aren’t “doing their job” by actively monitoring them 24/7. The problem isn’t parents at all… it’s the platforms. Or rather, the inescapable and harmful algorithmic content on these platforms.

Remember the Kids Online Safety Act that we spoke about earlier? The one in the US that keeps failing to progress due to censorship complaints? Well, it might shock you to hear that there’s a part of that bill that I actually agree with.

One of the key requirements of KOSA is that platforms would have to provide kids with the ability to opt out of personalised algorithmic recommendations, as well as limit features that “increase, sustain or extend the use” of the platform, such as auto-playing videos, platform rewards and similar mechanics.

Basically, make the platforms less predatory to young people, and stop pushing sensational content.

This, ladies and gentlemen, is the real solution. Or at least, part of it.

But, in order to make this actually work, and to avoid any of us having to fork over any more of our personal information, this needs to be enforced upon the platforms themselves, and not on their users. I’m talking about regulating the platforms, not just blocking access to them.

See, ever since the switch to algorithmically recommended content a few years ago, we’ve seen platforms prioritise and push the most sensational stuff one could imagine. Politically charged rhetoric, misinformation, disinformation, discriminatory discourse, hate speech, scammy products, conspiracy theories and the like. It’s amplified the bad actors and allowed dangerous voices to crowd out the experts. The uneducated and the grifters, joining an ever-growing chorus of content designed to grab your attention, keep you on the platform, scare you, and in some cases, make you part with your dollars in order to escape whatever “bad thing” these hucksters promote, which of course only they can help you with.

Then there are the other worrying effects of algorithm-driven content that this ban was originally designed to curtail, such as the well-documented negative impacts of platforms like Instagram, where young kids, young girls in particular, fall victim to extensive social comparison, leading to negative mental health outcomes that in some cases are fatal. Sure, in years gone by, when it was just you seeing a feed of your friends and direct connections, you might get that pang of jealousy when you saw a picture or a video of one or two people on a picturesque holiday, or effortlessly rocking a six-pack, but it was equally easy to scroll past those images and filter them from your mind. Before algorithms, your feed was chronologically sorted, meaning you saw the most recent content first, and it was content exclusively from people you followed. But now, thanks to algorithms and a desire for these platforms to keep you hooked and fight for your precious attention, your feed will be flooded with content recommendations from people or brands you don’t actively follow or even know. This is especially a problem if you engage with negative content. Linger a little too long on that holiday photo? Seems like that might be something you’re interested in, says the app. So here’s a hundred photos just like that. Enjoy!

Social media algorithms are going to keep on recommending things that you show an interest in, but also whatever they predict will keep you engaged with the platform you’re on. After all, like any other business, these social media companies are trying to turn a profit, and they do so by capturing your attention, growing their user base, and selling ads. Think of it like a car crash that you see on the highway. Chances are, if only for a second, you’ll sneak a look to see what’s going on there. It’s morbid, but it’s also part of our mental wiring. Somewhere deep inside our ancient lizard brain comes forth the instruction to gather whatever brief info we can on that potential source of danger. Now imagine if a social media algorithm was involved. It would notice you checking out that crash and say, hey, looks like you’re interested in car crashes. Well, here’s a bigger one. And you’d naturally look at that one. Great! Well, here’s an even bigger one. And a bigger one! How about a ten-car pile-up? What about the most gruesome thing you can think of? You like that? Looks like you do!

Now, as someone who lives in a country with a huge culture of drink-driving and a very active and ongoing campaign against road fatalities, and who has been in several car accidents, been thrown from a motorcycle, been run over by a bus and been caught in the middle of a three-car pile-up on the way to a funeral, I am in no way aiming to trivialise that issue. Rather, I want to illustrate the point that social media algorithms can quickly warp an initial interest in a topic or piece of content by amplifying more and more extreme, controversial and emotionally charged content. And depending on the type of content, this can start to reframe how you see the world, shaping your sense of reality and altering how you act in society. You’ve only got to look at the polarised political climate in the US, which has been widely fuelled by social media, to watch this algorithmic experiment play out in real time.
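For anyone who likes to see the mechanics, here’s a deliberately oversimplified toy model of that escalation loop, written in Python, alongside the old chronological feed for contrast. It’s a hypothetical sketch, not how YouTube, Instagram or any real platform actually ranks content. The function names and the 1-to-10 ‘intensity’ scale are made up for illustration, and the core assumption, that a slightly more intense post always holds attention a little longer, is baked in rather than measured.

```python
# A deliberately oversimplified toy model of an engagement-driven feed.
# Hypothetical: these names and the 1-10 "intensity" scale are invented for
# illustration and do not describe any real platform's ranking system.

MAX_INTENSITY = 10  # 1 = mild, 10 = the most extreme content in this toy catalogue


def next_recommendation(last_watched: int, step: int = 1) -> int:
    """Assumption: slightly more intense content always holds attention a bit
    longer, so the toy recommender serves one notch above the last thing watched."""
    return min(last_watched + step, MAX_INTENSITY)


def engagement_feed(start: int, rounds: int) -> list[int]:
    """Simulate a viewer who glances at every recommendation they are served."""
    history = [start]
    for _ in range(rounds):
        history.append(next_recommendation(history[-1]))
    return history


def chronological_feed(posts: list[tuple[int, int]]) -> list[int]:
    """The older model: posts from accounts you follow, as (timestamp, intensity)
    pairs, shown newest first with no re-ranking by engagement."""
    newest_first = sorted(posts, key=lambda post: post[0], reverse=True)
    return [intensity for _, intensity in newest_first]


if __name__ == "__main__":
    # One mild glance snowballs: intensity climbs every round until it hits the cap.
    print(engagement_feed(start=1, rounds=5))            # [1, 2, 3, 4, 5, 6]
    # The chronological feed just reflects what your follows posted, in time order.
    print(chronological_feed([(1, 3), (3, 1), (2, 2)]))  # [1, 2, 3]
```

The numbers don’t matter, but the shape does. In the toy feedback loop, nothing about the viewer has to change for the feed to drift toward the extreme. The chronological feed, by contrast, can only ever show what the people you follow actually posted.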

And the proliferation of ‘AI’ slop on these platforms only accelerates the issue.

Hey, so… let’s do a fun little experiment just to prove the point. I want you to open up a browser on your computer or phone. Make sure it is InPrivate or Incognito mode. Go to YouTube, and if you aren’t already signed out, make sure to sign out of your account. Now, YouTube’s homepage should look rather blank. In fact, for me it says, “Try searching to get started. Start watching videos to help us build a feed of videos that you’ll love”.

Next, I want you to type in something wild into the search bar. It doesn’t have to be too batshit crazy to begin with. I’ve typed in ‘government conspiracies’. Then, I want you to click on the first three video results, one at a time, watching each for like 1 second, before backing out to the homepage and clicking on the next result. Do that three times.

After the third time, go back to the YouTube homepage by clicking or tapping on the YouTube logo.

Now, what does your homepage look like? You should have a page full of algorithmically recommended videos, despite only watching 3 seconds of content. I ran this test twice, from a blank homepage to one filled with videos, on a browser window on my PC as I wrote the script for this episode.

Here are the highlights from the homepage. All of these video titles appeared within the first ten videos that were recommended, along with several ads, without my scrolling any further down the page.

The video titles I was recommended include:

- Every crazy conspiracy that turned out to be true, by a channel called “Trust me bro”

- 50 insane facts about the Nazis, with a thumbnail depicting a cartoon Hitler, in full salute

- An ‘AI’-generated Hitler, in the style of a Pixar film, with the title, ‘Ranking best Sora ‘AI’ trailers #3’. That one has 1.8 million views, and showed up in both tests

- What the media has told us over the years – That has nearly 3 million views

- And, The creepiest internet mysteries that were finally solved. That one actually sounds interesting.

All of these videos had hundreds of thousands, if not millions of views. This is the content that YouTube thinks “I would love”. And that’s just the tip of the iceberg. Imagine I typed in something about losing weight, or vaccines, or politics. This is the problem that needs to be addressed. It’s not about blocking access to these platforms, it’s about the content that they promote.

I mean, we all know people who have lost themselves to bad takes that they first heard on social media. The cookers, the political-extremist nutjobs, the brain-rotted, doomscrolling plebs who have drunk the Kool-Aid and gone all in on hateful, misinformed, science-free “theories”. Events like COVID left a lot of people with too much time on their hands, leaving them vulnerable to falling down the brain-rot rabbit hole. And we’re talking about fully grown adults here who should definitely know better.

The other elephant in the room

An unfortunate, or possibly intended outcome of this ban is… control. Not just control over what kids see on the internet, but control over the internet itself. By blocking a big chunk of people from online discourse, you effectively silence their ability to participate in politics and activism. And then there are those who are going to refuse to verify themselves online, so count them out as well. And finally, if and when identity verification comes for us all, who will feel comfortable in voicing their opinions online, without the veil of anonymity? How long before online political discourse is deemed ‘morally harmful’, and added to a ban list?

And just taking a step back for a moment, does anyone else find it ironic that this ban kind of proves a point, unintentionally? As in, kids are taking to the internet to voice their concern that they will be silenced from online political discourse, and this ban, well, takes away their ability to participate in those political discussions. Through this ban, they lose a huge channel for political expression, for learning about and participating in political discourse, cutting them off from online political information and silencing their voices. This ban effectively renders them politically invisible.

Earlier I spoke of the concern around the wording of this social media ban, which drew criticism from security experts, privacy experts and government officials alike, due to how seemingly broad in scope it was, and you’ll be glad to know that it… still remains vague and wide-reaching.

For instance, a social media platform will be required to adhere to this ban if they meet the following conditions:

- if the, quote, sole purpose, or a significant purpose, of the service is to enable online social interaction between two or more end-users

- if the, quote, service allows end-users to link to, or interact with, some or all of the other end-users

- and if the, quote, service allows end-users to post material on the service.

Sound like any sites you know of? Because to me that sounds like a lot of sites, if not a majority of the internet. I spoke about this exact issue nearly a year ago on the New Ways end-of-year show. Even if a site like Bluesky, for example, with its nearly 40 million users, hasn’t made the first round of banned platforms, how long before it is deemed to fall within the scope of this ban? Actually, I think Bluesky are prepared for that outcome: hours before this law went into effect, the A Modern Remedy account on Bluesky was subject to age verification, all because I had set the account’s ‘birthday’ to the day that we joined Bluesky.

As I said before, this is a very slippery slope.

Ultimately, the internet was designed to be a decentralised means of communication, owned by no one, a virtual land without borders. Open and free. And it should remain that way. Forcing all of us to identify ourselves online removes the safety of anonymity. It stifles free speech. It limits the desire to learn, to satisfy curiosity, and to seek out like-minded communities.

Even though this analysis involves discussion of the government, I don’t intend for this to be an attack on any particular political party or ideology, yet ironically, this ban may serve to harm the centre-left government that brought it in. The Australian Labor Party, the centre-left party I’m referring to, has now presided over both the failed internet filter and this latest social media ban. Both are forms of digital censorship. What’s odd here is that left-leaning parties tend to promote inclusive and open values and draw much of their support from younger voters. Yet it’s this very same demographic that is being targeted by this ban. It will be interesting to see how this plays out in the years to come, and what impact it has on political preferences as these kids reach voting age.

What can we do about it?

So, what can be done about this ban? Well, for many of us, not much. I’ve already had my Bluesky and Spotify accounts, both of which are for business purposes, flagged for age verification. In both instances, I just had to change the account’s birthday to my actual date of birth, instead of the date that I started AMR. You might be asking why I picked that date to begin with. For me, it just felt more logical to reflect the actual time that AMR had been in existence, that being a few years, instead of having an account that said it was decades old, especially if that information was going to be public-facing. So far, that’s all I’ve needed to do, but how long before another platform questions my age? And with this new law in place, there is nothing stopping them from requesting more personal information for verification.

Already there are reports that teens are employing various techniques to circumvent verification requests, including using alternative apps and VPNs. Now, I’m not going to outright suggest that you break the law, but as citizens in the US, UK and other countries have come to find out, there are very easy ways to avoid handing personal information to the government or a third party in order to keep using these platforms.

However, that doesn’t mean these laws won’t have an impact in the short and long term. And there might actually be some good that comes out of this.

Firstly, the coming backlash to this could serve as a spark to help reignite the conversation around government censorship and, more importantly, prompt us to question the increasingly powerful role that we’ve allowed big tech to play in our daily lives. This might also help to build a case against social media platforms that are driven by algorithmically recommended content.

And when this ban fails to make a real dent in mental health outcomes for our youth, we can start to shift the conversation to focus on what can actually help them, like taking steps to make these platforms safer, and prioritising the wellbeing of kids by making platforms more responsible for the content they allow, prioritise and promote.

Maybe then the government can take a long-overdue look at why so many young people are turning to ‘AI’ chatbots for companionship and mental health support, or at what we are going to do about the incredibly pervasive online gambling ads, ads that also target young people and are plastered all over TV and the internet.

Because if we don’t send a clear signal to the governments enacting these bans that they are ineffective, unwanted and utterly obtuse, then those governments will continue to overreach, continue to censor and continue their march toward controlling the internet. Already the Australian Government is pressing ahead with new rules, due to be enforced before the end of the year, that will force search engines to apply similar verification methods, such as photo ID checks, face scanning or third-party verification, for those looking to use the search services of Google and Microsoft. Combined with the social media ban, people here in Australia will start 2026 with fewer digital rights and greater privacy concerns, all thanks to a misguided attempt at helping our most vulnerable citizens, the younger generation.

And for those of you thinking, “Well, I’ll just use a VPN to get around these laws,” think again. The US is already looking into ways that VPN traffic can be blocked from social media platforms, so as to close that workaround, and Australia isn’t far behind, with the eSafety Commissioner stating that they expect platforms will “stop users from using VPNs to pretend to be outside Australia”.

In the interim, I recommend you fight back. Support organisations like the Electronic Frontier Foundation, Digital Rights Watch and Fight for the Future, who are standing up for your right to a free, open and secure internet. One that is a force for good, not one driven by profits and censorship. Spread awareness of the social media ban that’s happening here in Australia. Talk about it online. Read up on it. And for those of you in Australia, seriously reconsider voting for any political party that supports internet censorship.

So, in closing, this ban does little to address the real problems here. It targets children instead of the multi-billion- and multi-trillion-dollar companies that profit off our attention through algorithmically recommended content, content that is harmful not only to kids, but to the very fabric of society itself. This ban is an overreach and a violation of digital privacy and online freedoms. It risks stifling online discourse, and it may in fact accelerate the negative mental health outcomes it is meant to curtail.

You shouldn’t have to have your face scanned to use the internet. You shouldn’t have to upload your government ID to chat with your friends. And you shouldn’t have to disclose sensitive personal information that may be easily stolen, leaked and misused… all just to watch a video online.

All the way back in 2024, months before this ban was formally introduced to parliament, the Australian Prime Minister (or someone from his office) wrote a piece entitled “Blocking social media to the kids will save us all”, when in actuality, this ban puts us all at risk. Our leading mental health organisations know it, as do local and international digital privacy organisations. And now, hopefully, you do too. It’s time for the governments of the world to step up and take on Big Tech, that is, if they truly want to protect not only our children, but the entire citizenry, from harmful, hateful and horrendous algorithmically driven content. It’s time for regulation and effective solutions, not quick fixes or ineffective, overreaching censorship that misses the point entirely.